The first step is to import the classes you need. Without further do, let’s begin! Step 1: Make the Imports Don’t forget, your goal is to code the following DAG:

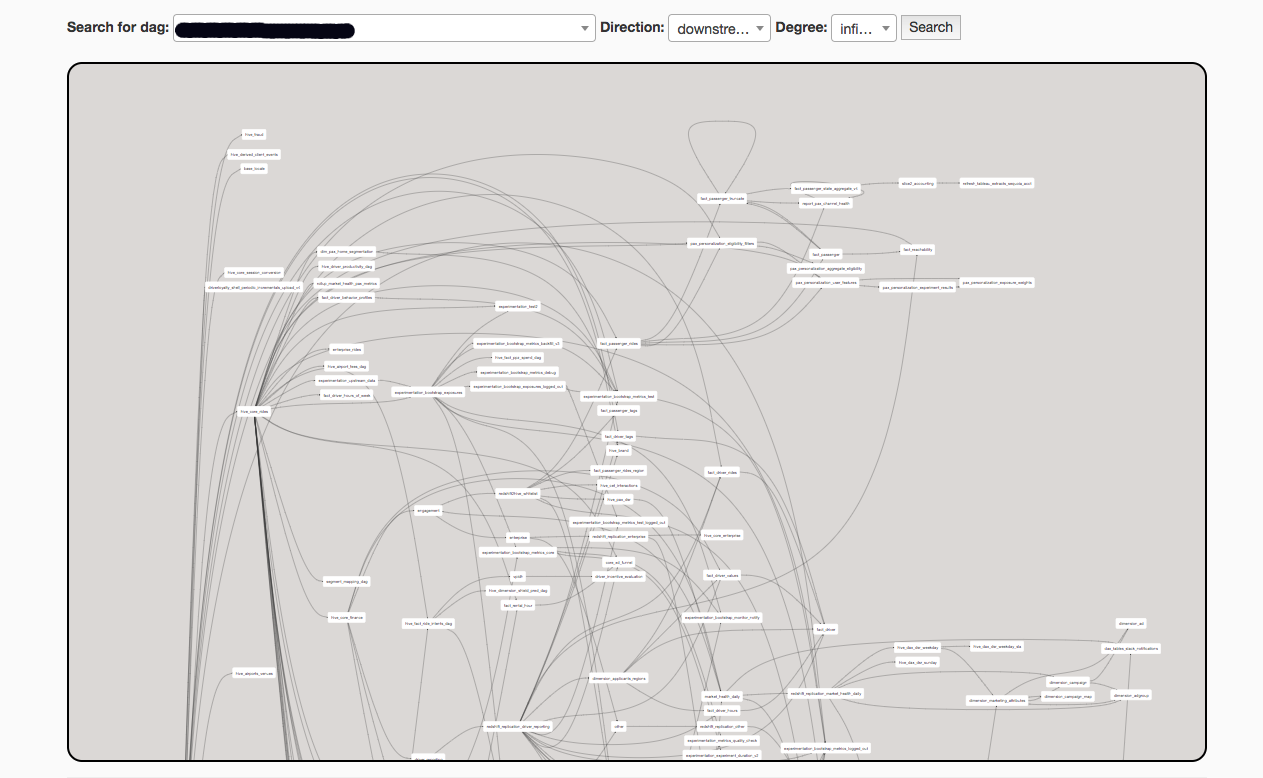

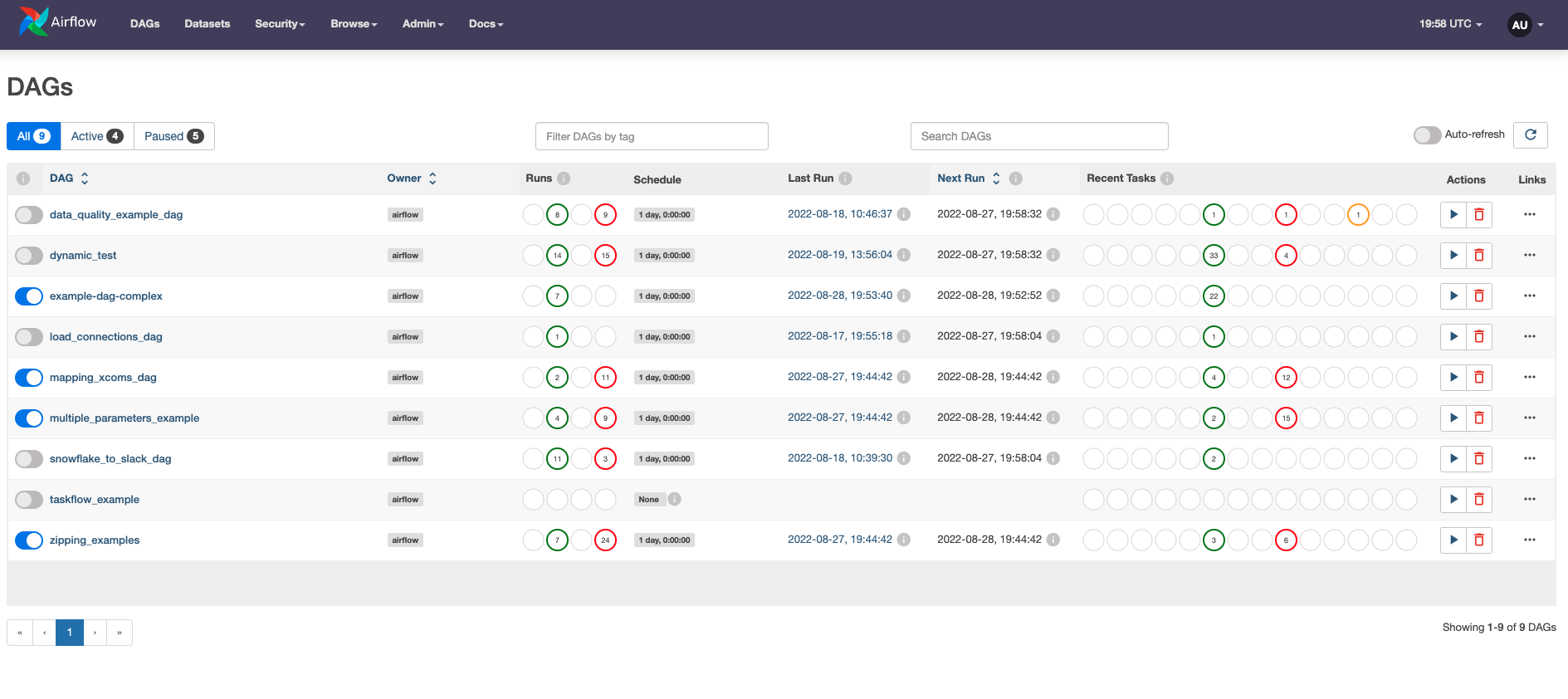

There are 4 steps to follow to create a data pipeline. Don’t worry, we will come back at dependencies.Īll right, now you got the terminologies, time to dive into the code! Adios boring part □ Coding your first Airflow DAG On the second line we say that task_a is an upstream task of task_b. In the example, on the first line we say that task_b is a downstream task to task_a. Task_b > and << respectively mean “right bitshift” and “left bitshift” or “set downstream task” and “set upstream task”. Basically, if you want to say “Task A is executed before Task B”, you have to defined the corresponding dependency. Those directed edges are the dependencies in an Airflow DAG between all of your operators/tasks. Dependencies?Īs you learned, a DAG has directed edges. Time to know how to create the directed edges, or in other words, the dependencies between tasks. You know what is a DAG and what is an Operator. At the end, to know what arguments your Operator needs, the documentation is your friend. With the DummyOperator, there is nothing else to specify. For example, with the BashOperator, you have to pass the bash command to execute. The other arguments to fill in depend on the operator used. Each Operator must have a unique task_id. The task_id is the unique identifier of the operator in the DAG. # The BashOperator is a task to execute a bash commandĪs you can see, an Operator has some arguments. # The DummyOperator is a task and does nothing An example of operators: from import DummyOperatorįrom import BashOperator When an operator is triggered, it becomes a task, and more specifically, a task instance. Airflow brings a ton of operators that you can find here and here. You want to execute a Bash command, you will use the BashOperator. For example, you want to execute a python function, you will use the PythonOperator. An Operator is a class encapsulating the logic of what you want to achieve. In other words, a task in your DAG is an operator. 
Ok, once you know what is a DAG, the next question is, what is a “Node” in the context of Airflow? What is Airflow Operator? A DAGRun is an instance of your DAG with an execution date in Airflow. Last but not least, when a DAG is triggered, a DAGRun is created. So, whenever you read “DAG”, it means “data pipeline”. Last but not least, a DAG is a data pipeline in Apache Airflow. As Node A depends on Node C which it turn, depends on Node B and itself on Node A, this DAG (which is not) won’t run at all.
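The bitshift dependency syntax and the "no cycles" rule described in this post can be illustrated with a small sketch in plain Python. This is not Airflow's actual implementation: the `Task` class and the `has_cycle` helper are hypothetical names made up for this illustration, and the sketch only assumes that `>>` means "set downstream task" and `<<` means "set upstream task", as explained in the text.

```python
class Task:
    """Toy stand-in for an operator (hypothetical, not Airflow's class)."""

    def __init__(self, task_id):
        self.task_id = task_id
        self.upstream = []    # tasks that must run before this one
        self.downstream = []  # tasks that run after this one

    def __rshift__(self, other):
        # self >> other: "other" becomes a downstream task of "self"
        self.downstream.append(other)
        other.upstream.append(self)
        return other  # returning "other" allows chaining: a >> b >> c

    def __lshift__(self, other):
        # self << other: "other" becomes an upstream task of "self"
        other >> self
        return other


def has_cycle(tasks):
    """Return True if following downstream edges ever loops back."""
    UNSEEN, IN_PROGRESS, DONE = 0, 1, 2
    state = {}

    def visit(task):
        state[task.task_id] = IN_PROGRESS
        for nxt in task.downstream:
            nxt_state = state.get(nxt.task_id, UNSEEN)
            if nxt_state == IN_PROGRESS:
                return True  # we came back to a task still being explored
            if nxt_state == UNSEEN and visit(nxt):
                return True
        state[task.task_id] = DONE
        return False

    return any(
        state.get(t.task_id, UNSEEN) == UNSEEN and visit(t) for t in tasks
    )


# Two equivalent ways to say "the first task runs before the second".
task_a, task_b = Task("task_a"), Task("task_b")
task_a >> task_b          # task_b is downstream of task_a

task_c, task_d = Task("task_c"), Task("task_d")
task_d << task_c          # task_c is upstream of task_d

# The broken graph: A depends on C, C depends on B, B depends on A.
node_a, node_b, node_c = Task("node_a"), Task("node_b"), Task("node_c")
node_c >> node_a
node_b >> node_c
node_a >> node_b

print(has_cycle([task_a, task_b]))
print(has_cycle([node_a, node_b, node_c]))
```

The first pair of tasks forms a valid graph, while the three nodes form a loop, which is why such a graph is not a DAG and cannot run. In a real Airflow DAG you would use the operators Airflow provides rather than a hand-written class like this one.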
